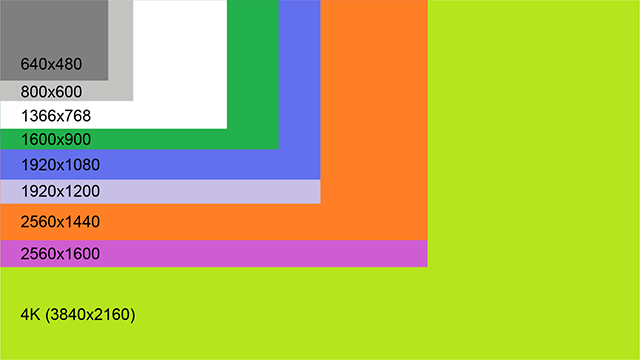

Not to be confused with memory-mapped I/O.

A memory-mapped file is a segment of virtual memory that has been assigned a direct byte-for-byte correlation with some portion of a file or file-like resource. This resource is typically a file that is physically present on disk, but it can also be a device, a shared memory object, or any other resource that the operating system can reference through a file descriptor. Once present, this correlation between the file and the memory space permits applications to treat the mapped portion as if it were primary memory.

An early (c. 1969) implementation of this was the PMAP system call on the DEC-20's TOPS-20 operating system, a feature used by Software House's System-1022 database system. SunOS 4 introduced Unix's mmap, which permitted programs "to map files into memory."

Windows Growable Memory-Mapped Files (GMMF)

Two decades after the release of TOPS-20's PMAP, Windows NT was given Growable Memory-Mapped Files (GMMF). Since the "CreateFileMapping function requires a size to be passed to it" and altering a file's size is not readily accommodated, a GMMF API was developed. Use of GMMF requires declaring the maximum size to which the file can grow, but no unused space is wasted.

The benefit of memory mapping a file is increased I/O performance, especially for large files. For small files, memory mapping can waste slack space, because mappings are always aligned to the page size, which is typically 4 KiB. A 5 KiB file, for example, occupies two pages (8 KiB), so 3 KiB are wasted.

Accessing memory-mapped files is faster than using direct read and write operations for two reasons. First, a system call is orders of magnitude slower than a simple change to a program's local memory. Second, in most operating systems the mapped memory region is the kernel's page cache (file cache) itself, so no copies need to be created in user space.

Certain application-level memory-mapped file operations also perform better than their physical file counterparts. Applications can access and update data in the file directly and in place, as opposed to seeking from the start of the file or rewriting the entire edited contents to a temporary location. Since the memory-mapped file is handled internally in pages, linear file access (as seen, for example, in flat file data storage or configuration files) requires disk access only when a new page boundary is crossed, and can write larger sections of the file to disk in a single operation.

A possible benefit of memory-mapped files is "lazy loading", which keeps RAM usage small even for a very large file. Trying to load the entire contents of a file that is significantly larger than the available memory can cause severe thrashing, as the operating system reads from disk into memory and simultaneously writes pages from memory back to disk. Memory mapping may not only bypass the page file completely, but also allow smaller page-sized sections to be loaded as data is being edited, similarly to the demand paging used for programs.

The memory mapping process is handled by the virtual memory manager, which is the same subsystem responsible for dealing with the page file. Memory-mapped files are loaded into memory one entire page at a time. The page size is selected by the operating system for maximum performance.
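Because mappings work in whole pages, two pieces of arithmetic from the discussion above can be made concrete: the slack space in the 5 KiB example, and how many pages a linear pass over a byte range spans. The sketch below is illustrative only. The helper names `mapped_footprint` and `pages_spanned` are hypothetical, and the 4 KiB default is an assumption (Python's `mmap.PAGESIZE` reports the running system's actual page size). The page count is a simplified model that ignores OS read-ahead and page eviction.

```python
import mmap

ASSUMED_PAGE_SIZE = 4096  # typical value; mmap.PAGESIZE gives the real one


def mapped_footprint(file_size: int, page_size: int = ASSUMED_PAGE_SIZE) -> tuple[int, int]:
    """Return (bytes covered by the mapping, bytes of slack) for a file.

    A mapping covers whole pages, so the file size is rounded up to the
    next multiple of page_size.
    """
    if file_size < 0 or page_size <= 0:
        raise ValueError("file_size must be >= 0 and page_size > 0")
    pages = -(-file_size // page_size)  # ceiling division
    covered = pages * page_size
    return covered, covered - file_size


def pages_spanned(offset: int, length: int, page_size: int = ASSUMED_PAGE_SIZE) -> int:
    """Count the distinct pages touched by the byte range [offset, offset + length).

    In the simple model above, a linear pass needs one page-sized load
    each time it crosses into a new page.
    """
    if length <= 0:
        return 0
    first = offset // page_size
    last = (offset + length - 1) // page_size
    return last - first + 1


print(mapped_footprint(5 * 1024))        # (8192, 3072): 8 KiB mapped, 3 KiB slack
print(pages_spanned(0, 10_000))          # 3
print(pages_spanned(4090, 100))          # 2: the read straddles a page boundary
print(mapped_footprint(5 * 1024, mmap.PAGESIZE))  # depends on this system's page size
```

Passing the page size as a parameter keeps the arithmetic testable while still letting callers plug in `mmap.PAGESIZE` on systems whose pages are not 4 KiB.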

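The in-place update described above can be sketched with Python's standard `mmap` module, which wraps the operating system's mapping facility (mmap on Unix, file mappings on Windows). This is a minimal sketch, not a reference implementation. The helpers `patch_in_place` and `demo`, the 10,000-byte temporary file, and the offset 5,000 are all hypothetical choices for illustration. It overwrites a few bytes in the middle of a file through a shared, writable mapping, then reads them back with ordinary file I/O to show the file itself changed without being rewritten.

```python
import mmap
import os
import tempfile


def patch_in_place(path: str, offset: int, data: bytes) -> None:
    """Overwrite len(data) bytes at offset through a shared mapping.

    With demand paging, typically only the pages covering the patched
    range need to be brought into memory; the rest of the file is not
    read up front.
    """
    with open(path, "r+b") as f:
        # Length 0 maps the whole file; the default access is writable and shared.
        with mmap.mmap(f.fileno(), 0) as mm:
            if offset < 0 or offset + len(data) > len(mm):
                raise ValueError("patch falls outside the file")
            mm[offset:offset + len(data)] = data  # plain memory write, no write() call
            mm.flush()  # ask the OS to write the dirty pages back to the file


def demo() -> bytes:
    fd, path = tempfile.mkstemp()
    try:
        with os.fdopen(fd, "wb") as f:
            f.write(b"." * 10_000)  # about 2.5 pages at 4 KiB
        patch_in_place(path, 5_000, b"HELLO")
        with open(path, "rb") as f:  # verify with ordinary file I/O
            f.seek(5_000)
            return f.read(5)
    finally:
        os.remove(path)


print(demo())  # b'HELLO'
```

The mapping is closed before the file is removed, which matters on Windows, where a file with an open mapping cannot be deleted.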